Transforming an infinite horizon problem into a Dynamic Programming one

This video shows how to transform an infinite horizon optimization problem into a dynamic programming one. The Bellman equation or value function is ...

Constantin Bürgi

Lecture 5: Search 1 - Dynamic Programming, Uniform Cost Search | Stanford CS221: AI (Autumn 2019)

Topics: Problem-solving as finding paths in graphs, Tree search, Dynamic programming, uniform cost search Percy Liang, Associate Professor & Dorsa Sadigh, ...

stanfordonline

Reinforcement Learning: Hidden Theory and New Super-Fast Algorithms

Sean Meyn, University of Florida https://simons.berkeley.edu/events/reinforcement-learning-hidden-theory-and-new-super-fast-algorithms.

Simons Institute

Approximate Dynamic Learning - Dimitri P. Bertsekas (Lecture 1, Part A)

Prof. Bertsekas at the KIOS Distinguished Lecture Series On the 18th of September 2017, the KIOS Research and Innovation Center of Excellence (CoE) ...

KIOS CoE

19. Dynamic Programming I: Fibonacci, Shortest Paths

MIT 6.006 Introduction to Algorithms, Fall 2011 View the complete course: http://ocw.mit.edu/6-006F11 Instructor: Erik Demaine License: Creative Commons ...

MIT OpenCourseWare

Lecture 8: Markov Decision Processes - Reinforcement Learning | Stanford CS221: AI (Autumn 2019)

Topics: Reinforcement learning, Monte Carlo, SARSA, Q-learning, Exploration/exploitation, function approximation Percy Liang, Associate Professor & Dorsa ...

stanfordonline

Lecture 10: Game Playing 2 - TD Learning, Game Theory | Stanford CS221: AI (Autumn 2019)

Topics: TD learning, Game theory Percy Liang, Associate Professor & Dorsa Sadigh, Assistant Professor - Stanford University http://onlinehub.stanford.edu/ ...

stanfordonline

Lecture 19 (Bellman Eq.)

Learning Theory (Reza Shadmehr, PhD) Introduction to optimal feedback control. Bellman's equation.

JHU Learning Theory

Reinforcement Learning (SS20) - Lecture 3 - Dynamic Programming

Humans to Robots Motion Research Group

Grad Course in AI (#11): Markov Decision Processes

Dr. Mausam (University of Washington) teaches Markov Decision Processes: MDP models (discounting, horizons, cost/reward), solutions to MDPs including ...

Mausam

Stochastic programming, dynamic programming and their use in climate change economics

Lecture given by THOMAS RUTHERFORD, University of Wisconsin-Madison, USA This lecture provides a brief introduction to modeling tools appropriate to ...

ICCGOV

Stuart Russell: "Probabilistic programming and AI"

PROBPROG Conference

Every Optimization Problem Is a Quadratic Program:...

Sean Meyn (University of Florida) https://simons.berkeley.edu/talks/tbd-189 Theory of Reinforcement Learning Boot Camp.

Simons Institute

Markov Chain Compression (Ep 3, Compressor Head)

Markov Chains Compression sits at the cutting edge of compression algorithms. These algorithms take an Artificial Intelligence approach to compression by ...

Google Developers

RL Course by David Silver - Lecture 2: Markov Decision Process

Reinforcement Learning Course by David Silver. Lecture 2: Markov Decision Process. Slides and more info about the course: http://goo.gl/vUiyjq.

DeepMind

Richard Murray - CS+Biology - Alumni College 2016

"Synthetic Biology: Programming Cellular Behavior Using DNA" Richard Murray (BS '85), the Thomas E. and Doris Everhart Professor of Control and Dynamical ...

caltech

Lecture 1: Overview | Stanford CS221: AI (Autumn 2019)

Topics: Overview of course, Optimization Percy Liang, Associate Professor & Dorsa Sadigh, Assistant Professor - Stanford University ...

stanfordonline

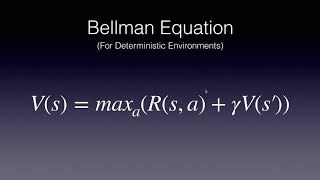

Bellman Equation Basics for Reinforcement Learning

An introduction to the Bellman Equations for Reinforcement Learning. Part of the free Move 37 Reinforcement Learning course at The School of AI.

Skowster the Geek

Intro to Dynamic Programming-1

This project was created with Explain Everything™ Interactive Whiteboard for iPad.

Tim Kearns

Stochastic Programming Approach to Optimization Under Uncertainty (Part 1)

Alex Shapiro (Georgia Tech) https://simons.berkeley.edu/talks/tbd-186 Theory of Reinforcement Learning Boot Camp.

Simons Institute

introduction to Markov Decision Processes (MFD)

This is a basic intro to MDPs and value iteration to solve them.

Francisco Iacobelli

JuliaCon 2017 | Decision Making under Uncertainty | Mykel Kochenderfer

Visit http://julialang.org/ to download Julia.

The Julia Programming Language

Graphs and Networks | MIT 18.06SC Linear Algebra, Fall 2011

Graphs and Networks Instructor: Nikola Kamburov View the complete course: http://ocw.mit.edu/18-06SCF11 License: Creative Commons BY-NC-SA More ...

MIT OpenCourseWare

Session 3. Andrzej Ruszczyński: Risk quantification and control

Title: Risk quantification and control: Challenges and opportunities Abstract: At first, we shall identify strategic research directions in modern systems ...

IIASA

MIT CompBio Lecture 05 - HMMs Hidden Markov Models II (Fall'19)

MIT Computational Biology: Genomes, Networks, Evolution, Health http://compbio.mit.edu/6.047/ Prof. Manolis Kellis Full playlist with all videos in order is here: ...

Manolis Kellis

Geometric Insights into the convergence of Non-linear TD Learning Joan Bruna

Seminar on Theoretical Machine Learning Topic: Geometric Insights into the convergence of Non-linear TD Learning Speaker: Joan Bruna Affiliation: New York ...

Institute for Advanced Study

Mehran Sahami: How can you make the best possible decisions?

How do you make decisions in your daily life? Many people make seemingly reasonable, yet ultimately irrational decisions. However, we can learn to make ...

Stanford University School of Engineering

35C3 - Information Biology - Investigating the information flow in living systems

https://media.ccc.de/v/35c3-9734-information_biology_-_investigating_the_information_flow_in_living_systems From cells to dynamic models of biochemical ...

media.ccc.de

Reinforcement Learning in the Presence of Nonstationary Variables with Simon Ouellette

Conventional reinforcement learning is difficult, perhaps impossible to use "as is" in the context of financial trading, due to the presence of time-varying ...

Quantopian

Introduction to Molecular Dynamics Simulations

This online webinar shared an introduction to Molecular Dynamics (MD) simulations as well as explored some of the basic features and capabilities of LAMMPS ...

WestGrid

CS 285: Lecture 2, Part 1

RAIL

Dimitri Bertsekas: Stable Optimal Control and Semicontractive Dynamic Programming

This distinguished lecture was originally streamed on Monday, October 23rd, 2017. The full title of this seminar is as follows: Stable Optimal Control and ...

UTC Institute for Advanced Systems Engineering

Reinforcement Learning 6: Policy Gradients and Actor Critics

Hado Van Hasselt, Research Scientist, discusses policy gradients and actor critics as part of the Advanced Deep Learning & Reinforcement Learning Lectures.

DeepMind

Advanced Machine Learning Day 3: Neural Architecture Search

How do you search over architectures? View presentation slides and more at ...

Microsoft Research

Introduction to Molecular Dynamics

SimbiosOpenMM

On The Hardness of Reinforcement Learning With Value-Function Approximation

Value-function approximation methods that operate in batch mode have foundational importance to reinforcement learning (RL). Finite sample guarantees for ...

Microsoft Research

Symposium in Honor of Robert C. Merton - Day 2: Andrew Lo

Robert C. Merton: The First Financial Engineer by Andrew Lo (MIT). Symposium in Honor of Robert C. Merton, PhD '70 August 5-6, 2019 MIT Sloan School of ...

Finance at MIT

Discussion: Optimization

Moderator: Gergely Neu (UPF) https://simons.berkeley.edu/talks/tbd-228 Deep Reinforcement Learning.

Simons Institute

Reinforcement Learning 10: Classic Games Case Study

David Silver, Research Scientist, discusses classic games as part of the Advanced Deep Learning & Reinforcement Learning Lectures.

DeepMind

Lecture 9: Game Playing 1 - Minimax, Alpha-beta Pruning | Stanford CS221: AI (Autumn 2019)

Topics: Minimax, expectimax, Evaluation functions, Alpha-beta pruning Percy Liang, Associate Professor & Dorsa Sadigh, Assistant Professor - Stanford ...

stanfordonline

Lecture 3: Machine Learning 2 - Features, Neural Networks | Stanford CS221: AI (Autumn 2019)

Topics: Features and non-linearity, Neural networks, nearest neighbors Percy Liang, Associate Professor & Dorsa Sadigh, Assistant Professor - Stanford ...

stanfordonline

[PyEMMA 2018] Introduction to Markov state models

Frank Noé gives an introduction to Markov state modelling of molecular dynamics using the PyEMMA software. PyEMMA (EMMA = Emma's Markov Model ...

MarkovModel